Architecting AI Culture

The AI-Driven Organization | Article 9 of 10

You can buy tools, mandate training, build the workflows, set the permissions, and roll out the policy documents, yet still fail to become an AI-Driven Organization.

Not because the tools are bad. Not because your people are resistant. But because you treated culture like a checkbox. As if it’s something to handle after the implementation is done.

Here’s the thing about culture. It isn’t downstream of strategy. It doesn’t follow the plan. Culture is the plan, and if you don’t build it intentionally, AI adoption will expose every crack in the foundation you didn’t know was there.

The Tool Trap

Most organizations focus on the wrong thing when they roll out AI. They spend a lot of time debating which tools to use, negotiating licenses, and governing the different tools. All of that is necessary, but none of it will change the culture.

I’ve watched organizations spend significant time and resources getting people to use AI and then discover, six months in, that usage is shallow. People are using AI to do what they were already doing, just marginally faster. Fundamentally, nobody is working differently. Nobody is asking different questions. Nobody is thinking at a different altitude.

The tool didn’t fail. The culture did.

When AI adoption happens in a culture of scarcity — where sharing information feels like giving away power, where failure gets penalized instead of processed, where “that’s not how we do it here” is the invisible fence around every new idea — AI just accelerates the existing patterns. It doesn’t transform them.

Culture shapes how the tools get used, and culture is shaped by leadership long before any tool gets deployed.

What AI Culture Actually Looks Like

An AI-ready culture isn’t a culture that loves technology. Technology-loving cultures can be just as slow to change as technology-skeptical ones. They’re just slower for different reasons.

An AI-ready culture is one where:

Experimentation is expected, not exceptional. People try things. They share what worked. They share what didn’t. Failure isn’t a verdict, it’s learning.

Learning is structural, not aspirational. There’s actually time and space for it. Not just a Slack or Teams channel nobody reads, but regular moments where teams reflect on what they’re discovering.

Information moves. Insights don’t stay siloed in the person who found them. Knowledge flows across teams. What one person learns, others can access.

Questions are celebrated. The person who asks “why are we doing it this way?” isn’t a problem. They’re an asset.

Status doesn’t protect bad processes. Seniority doesn’t exempt anyone from scrutiny. If the workflow is broken, it’s broken regardless of who designed it.

None of these are AI-specific. They’re just healthy principles for organizational culture, but specifically, they’re the soil in which AI actually grows.

In an organizational culture without these qualities, AI becomes one more tool people learn to work around rather than with.

The Leader’s Role

It often gets missed, but culture doesn’t change through communication or policy. Culture changes through shared behavior.

You can send the memo, add the AI vision to the strategic plan, and call out things like “embrace innovation” in the core values document, but none of this really matters if leaders do not embrace it themselves.

Organizationally, nothing matters as much as what leaders do in the moments when culture is actually being formed. The meeting where someone suggests a new approach and the leader’s first response is, “We tried something like that five years ago and it didn’t work.” The debrief where a failed experiment gets treated as a performance problem rather than a learning opportunity. The budget review where the innovation or experimentation budget is, too often, the first thing cut when resources get tight.

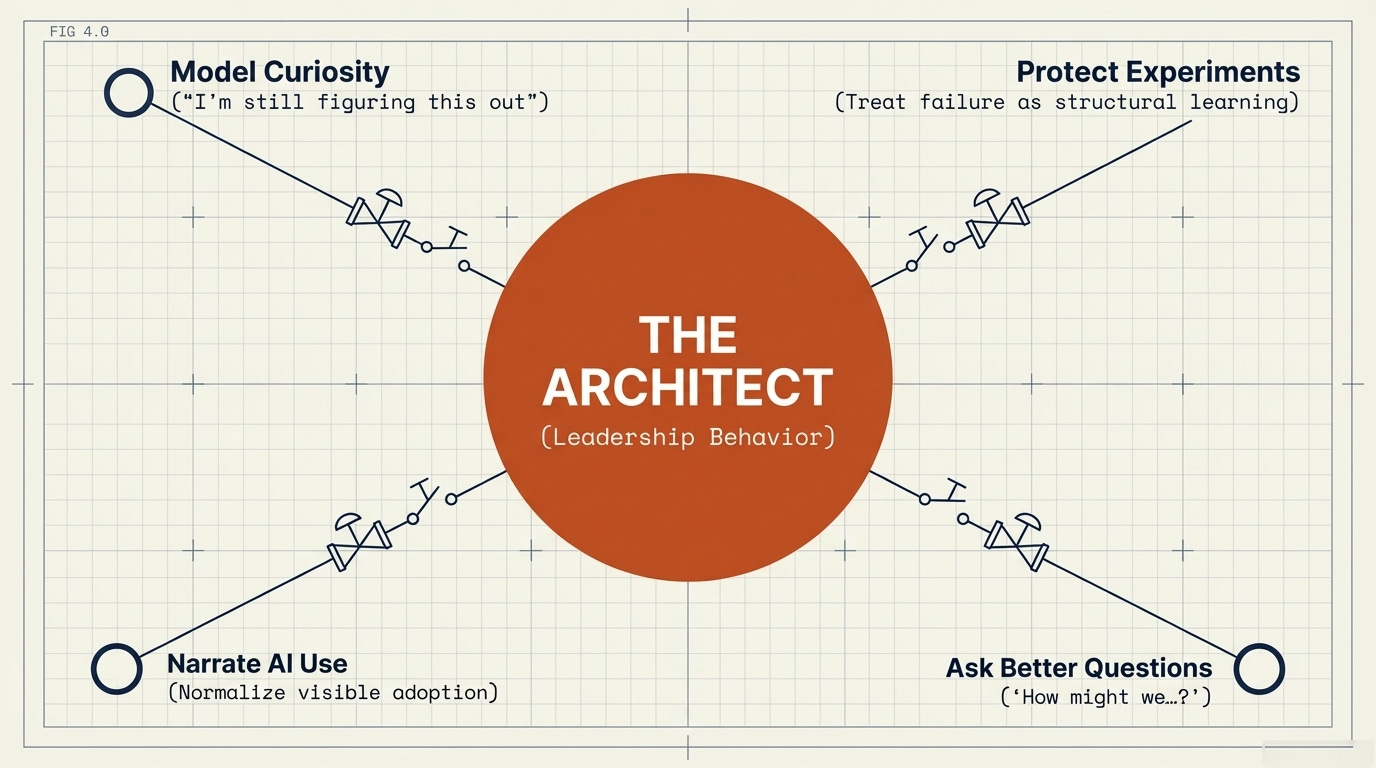

Those moments shape culture more than any town hall, memorandum, or policy. Leaders who want to build AI-ready cultures must lead differently. Not just talk differently. Lead differently. That means:

Model curiosity over certainty. If you are a leader, try to be seen exploring, not just directing. Say “I don’t know yet, I’m still figuring this out” in public.

Protect experiments. Create genuine space for teams to try things that might not work. Don’t penalize people when something doesn’t. Ask questions like, “What did we learn from this?”

Ask better questions. Don’t ask things like, “Why isn’t adoption higher?” Instead ask, “What’s making it hard to try new things here?” One of the best questions you can ask is, “How might we ________?”

Narrate your own AI use. If you’re using AI to think, to draft, to stress-test your decisions, let people know that. Say it often and in spaces where others can hear it. When leaders visibly embrace and use AI, it normalizes the behavior across other teams.

The organizations I see getting AI right have leaders who are doing the work themselves. They are not just supporting from a distance. They are using the very tools they are advocating for. They are not delegating adoption to others while remaining personally untouched by it.

Cultures That Quietly Kill AI Adoption

Organizations have landmines they don’t always know about.

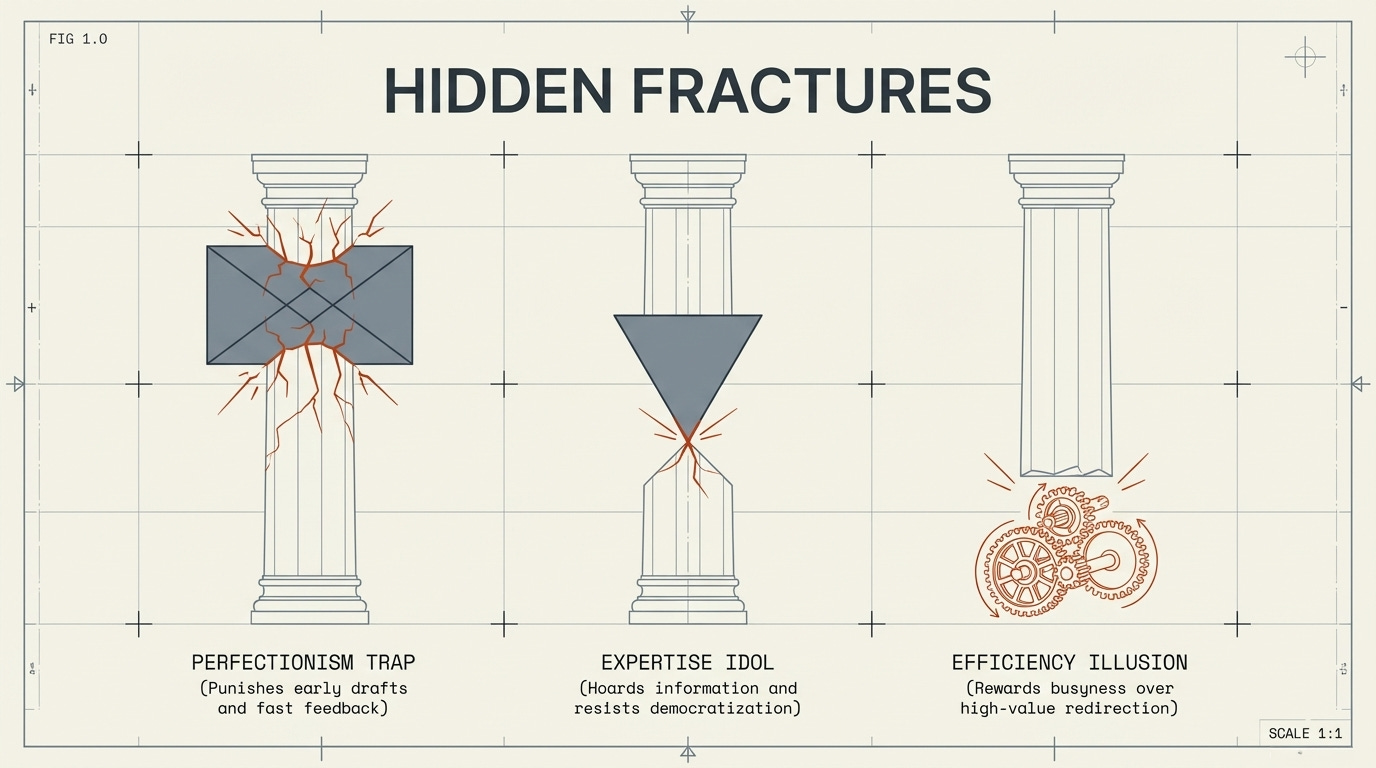

The Perfectionism Trap

In cultures where everything must be completely polished, with a bow on it, before it gets shared, AI can’t do its best work. If you expect AI to give perfect solutions on every first attempt, you will be disappointed. AI is iterative by nature. It gets better with fast feedback. If the culture punishes showing early drafts or rough thinking, people will only bring AI output when it’s already been sanitized. This defeats the point.

The Expertise Idol

In some organizations, expertise is identity. The person who knows the most holds the most authority. AI threatens this dynamic because it distributes access to information and analysis more broadly. In cultures organized around expertise as status, AI not only doesn’t get adopted, it gets resisted. Not loudly, but quietly by the people who have the most to lose. AI is the great democratizer of information and solutions.

The Efficiency Illusion

Some cultures reward looking busy over doing important work. AI can free up significant capacity, but if that freed-up capacity doesn’t get redirected toward higher-value activity, if it just gets filled with more meetings and more reports, the organization never captures the real benefit. Culture determines what people do with margin when they get it. Read The AI Dividend for more on this.

Connecting It Back to the Mission

I want to be clear about why this matters, because it’s easy to let the idea of an AI-Driven Organization stay in the abstract. You need to move from abstract ideas to concrete organizational action.

We are in a moment of unprecedented access to tools that could dramatically expand the reach and effectiveness of gospel work among unreached peoples. AI can accelerate research. It can multiply the capacity of field workers. It can reduce the administrative burden that pulls people away from relational ministry. It can help small teams punch far above their weight class.

All of these possibilities can only happen if the culture is ready for them. Maybe it’s better said this way: they can only happen if the organizational culture intentionally embraces AI.

If we build the AI tool stack without building the culture, we will squander one of the most significant organizational opportunities the global missions movement has ever had. Not because we were opposed to it, but because we didn’t do the slower, harder work of asking what kind of organization we actually need to be.

The tools are not the hard part. The culture is the hard part.

A Few Places to Start

If you’re leading a team or an organization navigating AI and truly want to become an AI-Driven Organization, here are some suggestions for where to put your energy:

Audit your failure culture. When something doesn’t work, what actually happens? Be honest. Not what’s supposed to happen. What actually happens.

Create visible experiments. Pick something where the stakes are low. Try an AI solution, share the process openly (messy parts included), and make experimentation visible and normal.

Reward questions more than answers. Specifically, and publicly. The person who asks, “How might we?” is often more valuable than the person who confidently executes the same old thing without asking questions.

Identify the quiet resisters. Every AI adoption has them. These are the people whose identity, authority, or comfort is threatened by change. Don’t steamroll them. Engage them. Sometimes the strongest critic becomes the most powerful champion once they’re genuinely included and reassured.

Connect every AI initiative back to the mission. Not efficiency, but truly the mission of your organization. The question isn’t, “How does this save time or money?” It’s actually, “How does this advance our stated mission and purpose?” When the mission is the frame, the culture conversation gets traction. You measure the success of your AI-Driven Organization by how it impacts your mission.

Remember, the AI tool stack is the easy part. You can buy it, configure it, deploy it, and even change it in a matter of weeks.

Establishing your organizational culture takes years. An AI-Driven Organization can only be built on top of that overall organizational culture. If your organization is risk-averse and change-averse, don’t expect AI to flourish quickly.

Organizations that build both the tools and the culture will find themselves operating at a level of mission effectiveness that wasn’t possible before.

That’s what all of this has been about. AI-Driven Organizations are not just looking at efficiency. They are focused on effectiveness, specifically the kind of effectiveness that advances the core purpose of the organization. And they measure this impact.

The question isn’t whether you’ll adopt AI. The question is whether you’ll build the kind of organization that can actually use it well.