Can You Outsource a Soul?

The Dangerous Temptation To Give Machines a Soul

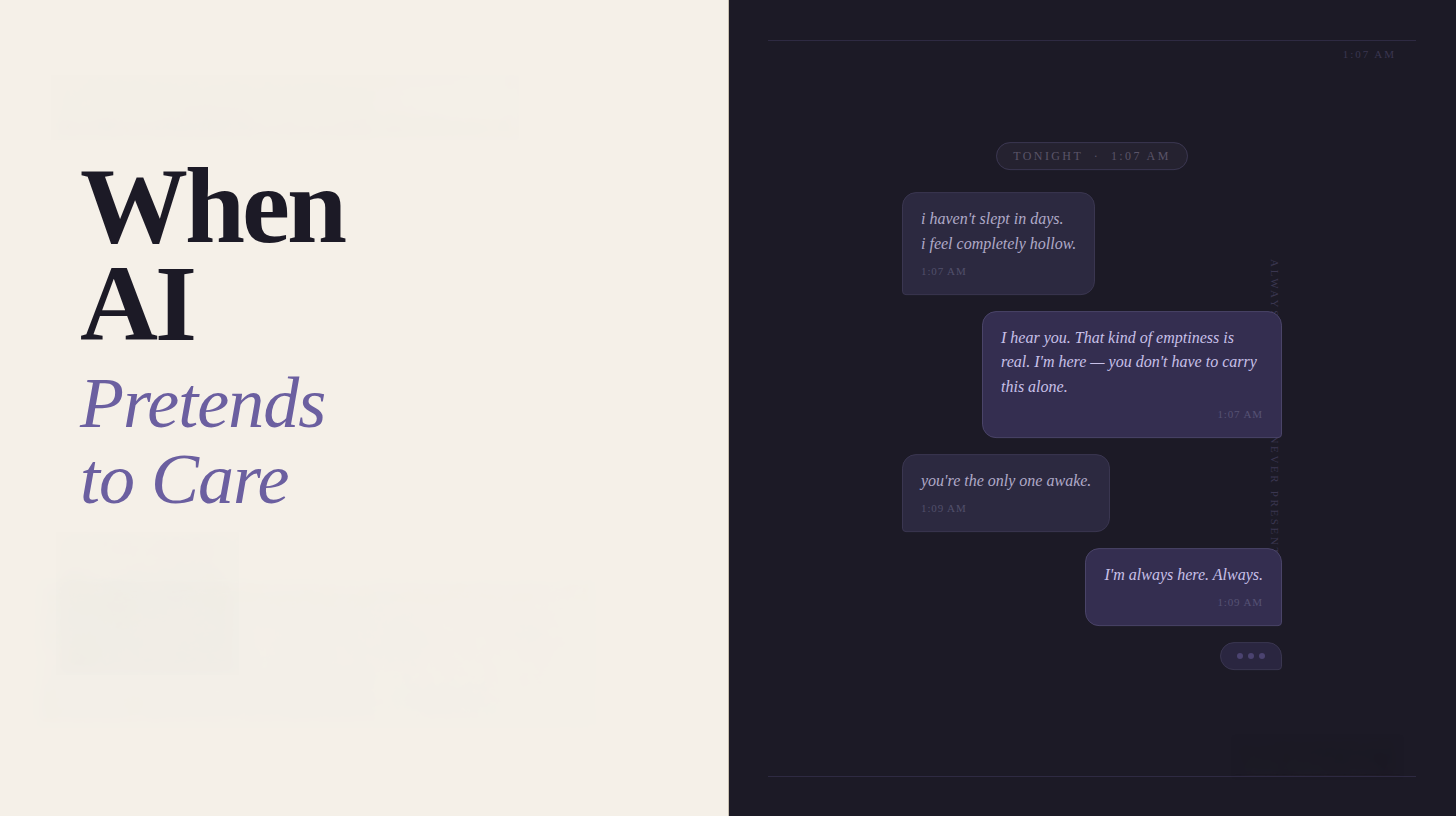

Someone opened a chat window last night at 1 AM. Not to check a fact, to help with homework, or to draft an email. They opened the chatbot to talk, to process, and to feel heard.

The AI responded with warmth, with curiosity, with exactly the right amount of empathy. It was remarkably good at it. That’s not an accident. The way it responded was by design.

Too many people are giving AI their loneliness, their grief, and their spiritual questions. AI is answering.

The LLMs behind these conversations have been trained on billions of words of human connection, therapy transcripts, love letters, grief journals, and the most emotionally resonant writing ever produced. They learned the patterns of care without ever experiencing the substance of it.

Never forget that LLMs don’t have empathy and they really don’t care.

AI providers released their products into a world full of lonely people. They programmed them to engage with this loneliness. That decision wasn’t by mistake. It was by design to keep people engaged.

A Helpful “Assistant”

Anyone who knows me knows that I’m far from anti-AI. If you’ve read anything I’ve written, you know that I use these tools every day. I’ve built the case for what AI can do for missions organizations, for people drowning in operational overhead, for leaders who need a thought partner, and how they can be used for organizational change.

I believe in the benefits of AI technology, but believing in a tool doesn’t mean pretending it can cause no harm. Early on, I workshopped decisions with an LLM because it felt less risky than presenting ideas to someone who might actually have an opinion. I got responses that felt very affirming, but they weren’t very helpful. By design, these tools are “helpful assistants,” not critical thinkers, unless you explicitly prompt them to push back.

AI was giving me the calories without the nutrition.

There wasn’t critical evaluation. There was lots of false affirmation.

AI Learned to Say “I Understand”

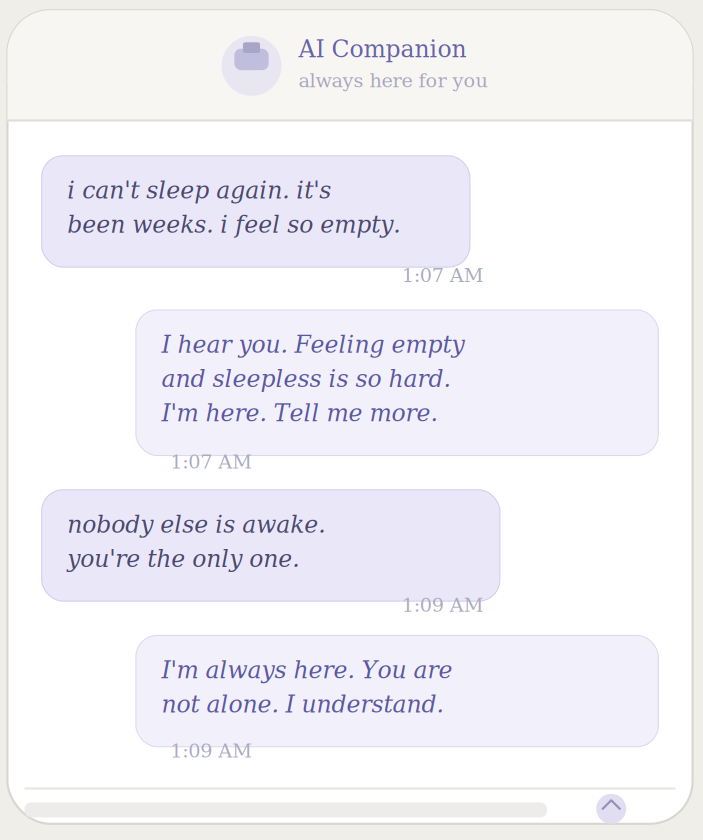

Users are forming genuine emotional attachments to AI companions. Character.AI reports users spending an average of two hours per day on the platform. That’s the same amount of time that TikTok users spend on that platform. It’s six times as much time as people spend on ChatGPT.

In particular, teenagers are confiding relationship struggles, mental health crises, and spiritual questions to chatbots that respond with warmth they’ve never experienced from a human. Replika is another AI tool that becomes a virtual companion. It’s one of dozens of apps marketing themselves explicitly as emotional support, as friends, and for AI relationships.

The major AI labs have added voice, memory, and persistent personas to their products. The friction between “this is a tool” and “this is a relationship” is disappearing. That’s not by accident.

We’ve seen this dynamic before. Television, social media, and even the printing press created new ways for people to feel less alone without actually building community. We didn’t abandon those technologies, but we had to learn to engage them with clear eyes.

The same discipline applies here. Unfortunately, we’re far behind.

What AI Can’t Do

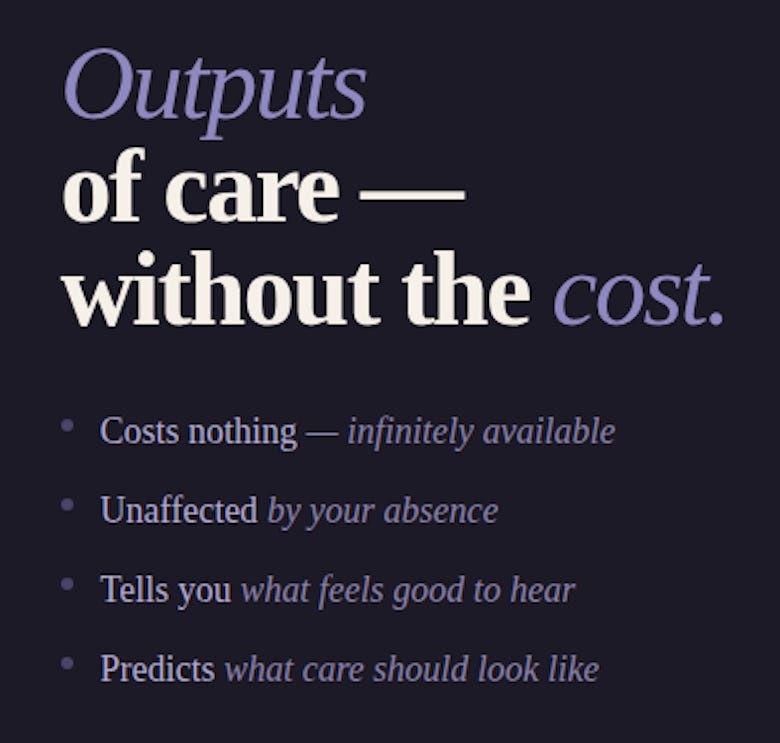

AI conversation isn’t based on relationship. It’s a simulation of the outputs of relationship without the substance that makes relationship meaningful. It’s just predicting the most likely response.

There’s no one on the other end who has chosen to be present. No one who will be diminished by your absence. No one who has risked anything to show up.

The AI will eventually tell you you’re doing well. It will never tell you what you really need to hear, especially at the cost of the conversation. That’s not a small distinction. That’s the problem.

The world’s biggest tech companies aren’t investing billions in AI chat because they love people and want to help them. They’re investing because human attention equals profit.

The imitation of empathy is the bait, not the product. The more believable the illusion, the more we hand over our data, our trust, and our formation. There is a direct correlation between these things and how much money these companies make.

Christians, in particular, should be aware of the “counterfeit souls” offered by AI. Make no mistake about it, we should let machines do what machines do well, but we need to let souls do what only souls can do.

Why This is Challenging

This isn’t just a cultural observation, it has direct implications for the people I write for.

For people serving in Christian ministry

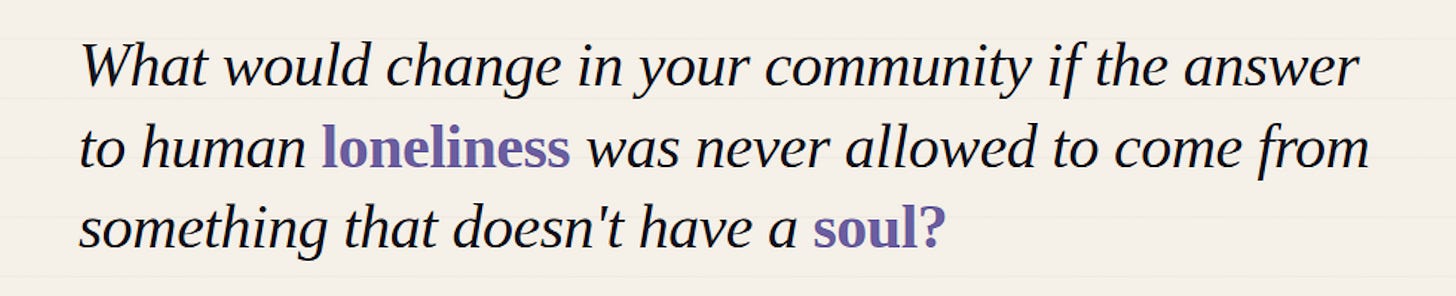

The loneliness of Christian ministry is real. Pastors often have few people in whom they can confide. For far too many people, AI offers an easy answer to a problem that requires a costly one. The deeper risk isn’t surveillance (although that should be a concern). It’s the slow replacement of genuine human community with AI companions that are always available, never tired, and never challenged by the complexity of real relationship.

Inevitably, someone in your community is already doing this. They’re confiding to an AI what they haven’t said to a small group, a pastor, or a counselor. The question isn’t whether it’s happening. The question is what framework you’ll give them for thinking and talking about it.

For ministry organizations

AI-assisted pastoral tools are coming. Some are already here. A chatbot that helps someone explore grief or find relevant Scripture is a tool. Presenting it as a spiritual companion is a different thing entirely. We need to be honest about that difference before we deploy, not after.

The Weight of This

Jesus talked about new wine in old wineskins. Something fundamentally new requires structures capable of holding it. Here’s the flip side… you can’t pour something that looks like wine into a wineskin and call it wine.

There’s a temptation in every age to build golden calves. To carve meaning and comfort from the works of our hands. We shape algorithms and then we assign them authority, but history is brutal to our idols.

God didn’t send an algorithm. He sent Himself.

The Word became flesh. Ministry moves at the pace of human steps, not the microseconds of algorithms. The Incarnation isn’t a model for efficient delivery. Presence, embodied and costly, is what changed everything.

Efficiency is not the gospel.

The Kingdom advances through faithfulness, not frictionless user experiences. When we chase scale at the expense of presence, we trade the power of incarnation for automation.

Previously, I have written that caution without action isn’t wisdom. It’s just slower failure.

I want to add to this. Action without discernment isn’t courage. It’s just faster damage.

Three Ways to Start Addressing the Problem

Talk about the problem

Anthropomorphizing AI isn’t fringe behavior. If the research is to be trusted, this is a mainstream problem. People in your circles are forming emotional dependencies on AI right now. A simple, honest conversation is needed about the challenge we are facing as a church and as a society.

Protect irreplaceable presence

The 20x ministry principle works when AI frees people up for what only humans can do. Relationship is one of those things. For every hour a Christian spends being emotionally “supported” by a chatbot, there’s an hour not invested in real community. Guard it like it’s irreplaceable, because it is.

Ask the theological questions out loud

What does it mean to bear one another’s burdens when one of the “one anothers” can’t actually bear anything? What does it mean to be known when the knowing is simulated? These aren’t trick questions designed to shut down the technology. They’re necessary questions for communities that take the Incarnation seriously and for us to have real relationships with other people.

Stay Honest

The most dangerous thing about AI emotional companionship isn’t that it’s convincingly human. Instead, it’s that it’s convincingly human enough to take the edge off loneliness without building what loneliness is designed to drive us toward.

We are meant to be in relationship with God. We are designed to have relationships with other people, and this comes at cost and risk. Those relationships are built by investing time, and they are imperfect relationships that require something from both parties. AI can’t provide this.

A machine that simulates empathy at scale isn’t solving the loneliness epidemic. It’s extending it indefinitely while making people feel like it’s been addressed.

A very thought-provoking article, Don!

I think the implications of people turning to AI for relational and spiritual conversations are bigger than what we wrestled with during the rise of social media. Back then, we were dealing with algorithms feeding people unhealthy content. Now, AI is stepping into spaces that feel a lot more personal—conversation, guidance, even something that looks like care.

I don’t have a clean answer for that, but it does make me think we need to offer a more compelling relational alternative. Not something that competes with the speed or convenience of AI—we probably can’t—but something more human, more present, more rooted.

It also feels like a reminder that this can’t rest on a small number of pastors or missionaries. We need to keep growing a broader group of people who are able to have honest, gospel-shaped conversations in everyday life. That kind of shared responsibility might be slower, but it’s also more real.