Scaling the CRIT Framework

The AI-Driven Organization | Article 4 of 10

Every organization has a decision-making problem it doesn’t know it has.

It’s not that decisions don’t get made. They do. Constantly. Proposals get approved. Strategies get greenlit. Initiatives get launched. Resources get allocated. The calendar fills up, the budget gets divided, and the org moves forward.

The problem is what doesn’t happen before any of that.

Often, nobody challenges the assumptions underneath the decision. Nobody asks the uncomfortable questions. Nobody stress-tests the thinking before it becomes a commitment. And by the time the cracks show, maybe six months in, maybe a year in, often longer, the organization has already built something on a foundation nobody bothered to inspect.

This is not a failure of intelligence. It’s a failure of process.

And it’s exactly the problem the CRIT framework is designed to solve.

Geoff Woods introduces CRIT in The AI-Driven Leader as a way for individual leaders to use AI to sharpen their thinking before making decisions. The acronym stands for:

Challenging assumptions

Reducing bias

Improving strategy

Testing ideas

It’s a simple, repeatable way to use AI as a thought partner, not to make decisions for you, but to make your decisions better.

It’s a great framework for leaders. But applied at the organizational level, it becomes something more powerful.

Imagine an entire organization that builds assumption-challenging into its DNA. Not as a one-time exercise. Not as a quarterly review. But as the standard way proposals get developed, strategies get formed, and priorities get set.

That’s the shift I’m writing about today.

Let me break down what CRIT looks like when it scales beyond the individual leader.

Challenging Assumptions

Challenging Assumptions at the org level means building a culture where no proposal goes forward without someone, or something, pushing back on its foundations. The question isn’t “Is this a good idea?” It’s “What are we assuming has to be true for this to work?”

Most organizational assumptions are invisible. They’re baked into the proposal before anyone sits down to write it. The market will respond this way. The team has the capacity. The timing is right. The audience will engage. These assumptions are rarely named because naming them feels like doubting the idea. In many organizational cultures, doubt is unwelcome.

AI changes that dynamic. When you ask AI to surface your assumptions, it’s not personal. It’s process. And that matters enormously in organizations where interpersonal dynamics often suppress honest challenge.

Reducing Bias

Reducing Bias at the org level is where things get really interesting and a little uncomfortable. Every organization has a bias profile.

Confirmation bias: We find evidence for what we already believe.

Sunk cost bias: We keep funding things we’ve already invested in.

Authority bias: We defer to the most senior person in the room.

Optimism bias: We consistently underestimate how hard things will be.

These biases don’t disappear because smart people are in the room. In many cases, smart people are better at rationalizing their biases. AI doesn’t have a stake in the outcome. It doesn’t care about the org chart. It doesn’t remember who championed this initiative two years ago. It just responds to the logic, and that’s exactly the kind of dispassionate challenge most organizations desperately need.

Improving Strategy

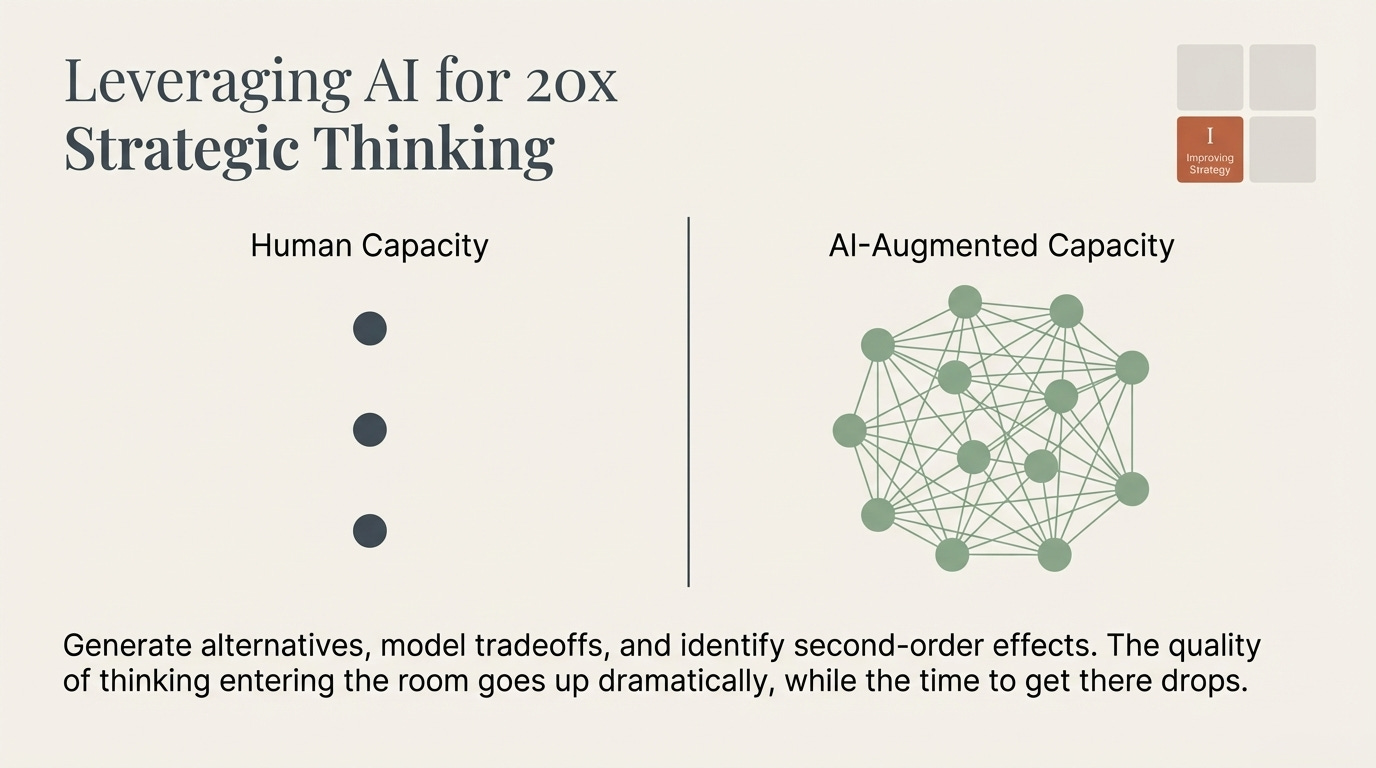

Improving Strategy means using AI not just to validate the strategy you have, but to generate alternatives you haven’t considered. This is where the 20x thinking kicks in hard.

A small strategy team with AI can now explore ten versions of a plan where they previously could only develop two or three. They can run scenarios, model tradeoffs, identify second-order effects, and pressure-test options, all before presenting the idea to anyone else. The quality of the thinking that enters the room goes up dramatically, and the time it takes to get there drops sharply.

Testing Ideas

Testing Ideas before they become initiatives is perhaps the highest-value application of the framework for faith-based organizations. Before you deploy a team, before you launch a program, before you build the tool, and before you hire new headcount, ask AI to be ruthlessly honest about where your idea is weakest.

Not to kill the idea, but to save it!

Here’s the challenge with scaling CRIT across an organization.

It requires leaders who are genuinely secure enough to have their thinking challenged. And that challenge has to come before decisions are finalized, not after. That’s a culture requirement, not a technology requirement. You can deploy AI tools across every team tomorrow and still have an organization where nobody uses them to challenge assumptions because the culture hasn’t given them permission.

This is why I keep coming back to the culture piece. The CRIT framework doesn’t work in an organization where challenge is unwelcome, where the leader’s instinct is always the starting point, or where “we’ve always done it this way” is a legitimate answer to a strategic question.

The technology is the easy part. Building a culture that actually wants to be challenged is where the hard work begins.

Practical Challenge:

Today, let’s start small. Pick one decision-making process, whether a proposal template, a strategy review, an annual planning cycle, or something else, and build CRIT into it explicitly.

Before any major proposal goes forward, require that it include a section called “Assumptions and Challenges.”

What assumptions does this proposal rest on?

What did we ask AI to challenge?

What came back, and how did we respond to it?

That single change will do more for your organization’s decision quality than almost anything else you could implement. It creates accountability for honest thinking. It surfaces the invisible foundations. And it normalizes the idea that challenge isn’t a threat; it’s how organizations make good decisions.
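For teams that track proposals in a shared tool, the “Assumptions and Challenges” requirement could be enforced with a simple check. The sketch below is only one way to picture it, written in Python. The names `AssumptionsAndChallenges`, `missing_items`, and `ready_for_review` are hypothetical and invented for illustration; they do not come from the CRIT framework or from any particular product.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AssumptionsAndChallenges:
    """Hypothetical record of the three questions for one proposal."""
    # What assumptions does this proposal rest on?
    assumptions: List[str] = field(default_factory=list)
    # What did we ask AI to challenge?
    asked_ai_to_challenge: List[str] = field(default_factory=list)
    # What came back, and how did we respond? (challenge -> response)
    responses: Dict[str, str] = field(default_factory=dict)


def missing_items(section: AssumptionsAndChallenges) -> List[str]:
    """List the unanswered questions that should hold a proposal back."""
    gaps = []
    if not section.assumptions:
        gaps.append("No assumptions are named.")
    if not section.asked_ai_to_challenge:
        gaps.append("Nothing was put to AI for challenge.")
    if not section.responses:
        gaps.append("No challenges and responses are recorded.")
    return gaps


def ready_for_review(section: AssumptionsAndChallenges) -> bool:
    """A proposal moves forward only when all three questions are answered."""
    return not missing_items(section)
```

A check like this only confirms that the section was filled in. Whether the answers are honest is still a matter of culture, not code.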

The AI-driven organization doesn’t just use AI to work faster. It uses AI to think more honestly. And honest thinking, at scale, is what separates organizations that build well from organizations that keep rebuilding the same thing over and over.

Article 4 of 10 in “The AI-Driven Organization” series. Next up: Multiplying Your People — what the 10x employee actually looks like, and how to build a team that thrives with AI rather than fears it.

“It requires leaders who are genuinely secure enough to have their thinking challenged.”

Man, that is spot on. Even leaving the AI discussion aside, insecure leaders are team killers.